Marina Walker Guevara speaks about AI at the GIJC in Sweden. Image: Smaranda Tolosano for GIJN

Investigating — And Embracing — the AI Revolution


AI might become the most transformative tool in journalism’s history, changing the way newsrooms — and their products — work.

But while AI can boost research capabilities and open new avenues for investigative journalism, historical biases in data can introduce systemic flaws in the algorithms used by AI technology. Governments and corporations can, and do, employ AI in ways that can repress citizens or widen social inequalities. As AI’s game-changing tools become part of our technological infrastructure, they will become less visible, but more pervasive.

A day of AI-oriented events at the 13th Global Investigative Journalism Conference (#GIJC23) discussed how AI can help newsrooms do their jobs, how investigations have revealed the harmful impact of AI technology on communities, and how journalists should approach a topic often framed by narratives of either hype or despair. Speakers also shared tips and best practices for using and investigating AI.

Using AI to Build Better Stories

Just as investigative journalism shifted from the “lone wolf” reporting style to the cross-border collaborative projects that produced pieces like the Panama Papers and the Paradise Papers, journalists can now use AI to tackle stories that would have been too challenging in the past.

“Going forward, to understand AI, to investigate AI, and to co-opt AI for good is our mission,” said Marina Walker Guevara, executive director of the Pulitzer Center on Crisis Reporting, a GIJN member, during her talk “What Does AI Have to Do with Investigative Journalism? Everything!”

“We’re going to need some of the same ingenuity, the same radical sharing mentality, and thinking-outside-of-the-box approach that fueled all of us when we went from lone wolves to collaborators,” Walker Guevara added.

AI is often designed by large tech companies without the most vulnerable people in mind, and there is very little government regulation. These combined dangers mean that it is imperative to investigate how it’s used. “Probing AI is every investigative reporter’s unavoidable responsibility at this time,” said Walker Guevara.

She called on journalists to shake off the fear of AI and realize that it is much more an accountability and political story than it is a business or tech story. Walker Guevara also pointed out that AI can help investigative journalists find patterns in the chaos of data that can be interpreted to produce stories.

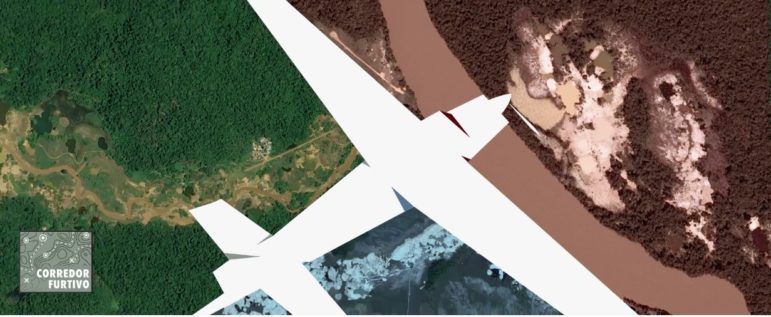

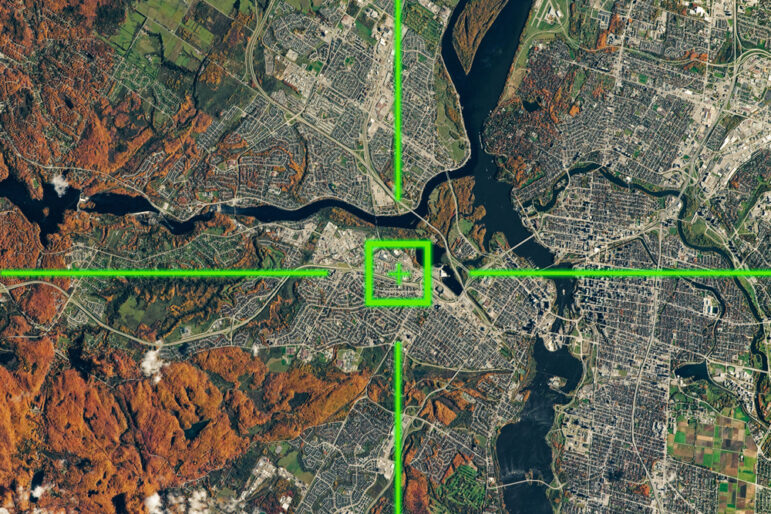

As an example, she cited the uncovering of more than 3,000 illegal mines in the Amazon rainforest by a team at Armando.info, a GIJN member, led by Joseph Poliszuk. By using AI technology to make sense of satellite data, cross-referenced with databases on organized crime, Armando.info produced the most detailed picture to date of illegal mining in the Amazon. (This story also made the Global Shining Light Award shortlist.)

How Small Newsrooms Can Use AI

In a talk titled “How AI Can Save Small Newsrooms,” Rune Ytreberg, editor for the Norwegian outlet iTromsø, shared his newsroom’s experiences of designing AI tools for data journalism stories.

A newsroom that uses AI most effectively is made up of experts in different fields, he observed. “I think you need a developer who can do database design architecture. Then you need a data analyst,” he said. “I would want that data analyst to have a social science background, and to program. I would also need a designer to make a user interface, one developer that understands natural language programming, and data journalists [who would write the stories].”

At iTromsø, AI developers and data journalists are constantly developing new tools, and bouncing ideas off each other to produce innovative stories. Their newsroom designed an AI tool named “L Ai La” which constantly retrieves data, analyzes it, and sends news alerts and recommendations to journalists about content that might be interesting for stories.

Probing AI’s Invisible Infrastructure

Garance Burke, global investigative journalist with the Associated Press (AP), leads a team investigating the impact of AI technology on communities.

In her talk, “AI: Perils and Promise,” she noted that “what’s also interesting about this field is that it forces you to go down these rabbit holes to understand previous policies and how they may have influenced those datasets.”

Burke explained how she and Sally Ho researched a piece about an algorithm used by child welfare officials to determine which families should be investigated. In US states such as Oregon, Pennsylvania, California, and Colorado, the algorithm generated racial disparities in the welfare system because of a historical bias against African-American communities in the data collection process.

Garance Burke, a global investigative journalist with The Associated Press. Image: Edvin Lundqvist for GIJN

Biased data fed into an algorithm used for policy design produced a biased policy. Following AP’s investigation, Oregon stopped using the tool and the US Justice Department opened a civil rights investigation into the algorithm’s use.

Burke and Ytreberg also shared some practical advice for newsrooms both using and investigating AI.

Tips for Journalists Using AI

- Consult the AP’s standards around generative AI and the chapter on AI in the 56th edition of the AP Stylebook.

- A relatively simple first use of AI is digitizing research material, which can also support further uses of AI technology.

- Use data journalism to produce your own data in house, because this will be a key foundation of the AI you use.

- When filing FOIA requests, ask for wide time frames (at least 10 years) so you have a larger dataset in which to detect changes.

- Use AI to do efficient analysis of data that can later be used to strengthen citizens’ information about their communities.

Tips for Journalists Investigating AI

Burke suggested these five tips for journalists:

- Investigate who made the decisions on building/deploying the models.

- Focus on people impacted by technology.

- Research the applicable laws and regulations, if any exist.

- Look for disparate impacts in our communities and how those impacts relate to historical racial biases.

- Seek stories, issues, and applications that transcend the individual and highlight accountability and global connections.
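For reporters who obtain records from an algorithmic screening tool, a first pass at the "disparate impacts" tip can be as simple as comparing how often the tool flags people in different groups. The sketch below is only an illustration, not a method described by the speakers: the function names, the group labels, and the toy records are all hypothetical, and a real analysis would need actual data and statistical review.

```python
from collections import Counter


def flag_rates(records):
    """Return the share of records flagged by a tool, per group.

    `records` is an iterable of (group, was_flagged) pairs.
    """
    totals = Counter()
    flagged = Counter()
    for group, was_flagged in records:
        totals[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def rate_ratio(rates, group, reference):
    """Ratio of one group's flag rate to a reference group's flag rate."""
    if rates[reference] == 0:
        raise ValueError("reference group has a flag rate of zero")
    return rates[group] / rates[reference]


# Hypothetical toy data, invented purely for illustration.
records = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 15 + [("group_b", False)] * 85
)

rates = flag_rates(records)
ratio = rate_ratio(rates, "group_a", "group_b")
print(rates)
print(f"group_a is flagged at {ratio:.1f} times the rate of group_b")
```

A gap like this is a starting point for questions, not a finding in itself. Following the tips above, the next step would be to ask who built and deployed the model, what data it was trained on, and how that data reflects earlier policies.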

For journalists searching for answers on how to approach AI, Burke suggests moving on from the global “hype-cycle” about AI and the “doom-loop cycles of despair” about robots taking away our jobs. “We’re trying to get beyond that and just go back to journalistic basics and ask questions about how these systems work.”