Image: GIJN

Tips to Guide Investigative Journalists in Document Text Analysis

Read this article in

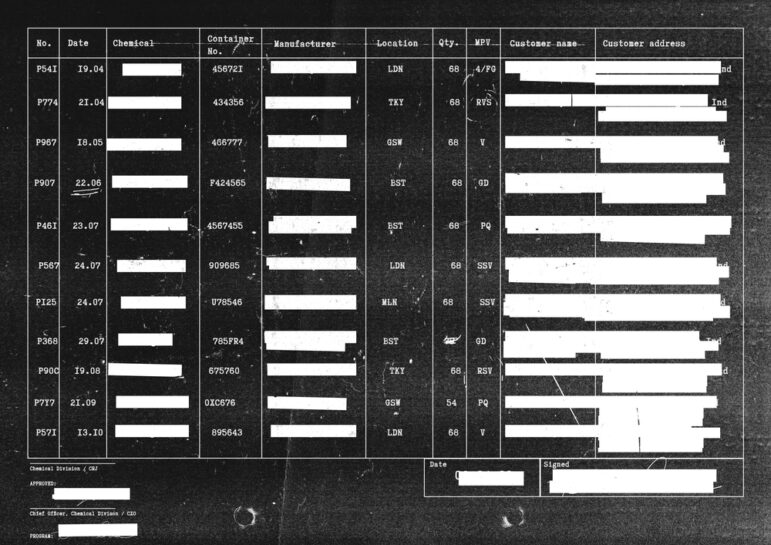

Investigative journalists often face the challenge of reviewing and combining large documents or large volumes of text-based data. This work can be exhausting and labor-intensive.

Ideally, data is available in a friendly format that is easy to analyze, such as a spreadsheet, CSV, or JSON file. But data is often locked in hard-to-extract sources like PDFs, emails, articles, and social media posts, noted Fernanda Aguirre, a data analyst at The Examination and at Data Critica. The Examination is a nonprofit newsroom that investigates preventable health threats; Data Critica is a data journalism organization focused on Latin America.

“These kinds of documents are not as intuitive to analyze as they are when they come in a structured way,” Aguirre explained during a session at the 13th Global Investigative Journalism Conference (#GIJC23). “[This is] when we are dealing with what is known as unstructured data specifically in text-based form.”

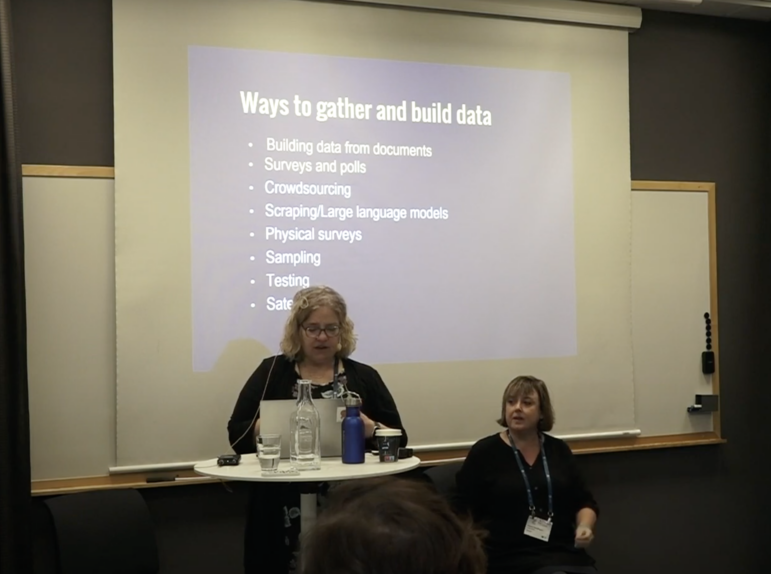

At GIJC23, Aguirre emphasized that structured data is easier to analyze than information in text form. Structured data, she pointed out, lets journalists work with it directly: applying arithmetic operations, counting categories, and calculating percentages or rates of change.
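
With structured records, each operation Aguirre lists comes down to a line or two of code. The sketch below uses a hypothetical list of records with `year` and `category` fields. The data is invented purely for illustration.

```python
from collections import Counter

# Hypothetical structured records (invented for illustration only).
records = [
    {"year": 2022, "category": "water"},
    {"year": 2022, "category": "air"},
    {"year": 2022, "category": "water"},
    {"year": 2023, "category": "water"},
    {"year": 2023, "category": "air"},
    {"year": 2023, "category": "water"},
    {"year": 2023, "category": "soil"},
]

# Counting categories.
by_category = Counter(r["category"] for r in records)

# Percentage share of each category.
total = sum(by_category.values())
shares = {cat: 100 * n / total for cat, n in by_category.items()}

# Rate of change in the number of records between two years.
by_year = Counter(r["year"] for r in records)
pct_change = 100 * (by_year[2023] - by_year[2022]) / by_year[2022]

print(by_category.most_common())
print({cat: round(s, 1) for cat, s in shares.items()})
print(f"{pct_change:.1f}%")
```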

To get text into this usable state, Aguirre said, it must be converted into something measurable. “This is done simply by extracting insights that point us in the direction we need to investigate further,” she said, noting that “the purpose of analyzing documents with text is to extract useful information from them.”
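
A common way to make text measurable is to count how often each word appears in each document. This is an assumption on our part and was not described in the session. The result is a small table, known as a document-term matrix, that can be analyzed like any spreadsheet. The snippets and the simple regular-expression tokenizer below are illustrative assumptions.

```python
import re
from collections import Counter

# Hypothetical snippets standing in for pages of a document dump.
docs = {
    "memo_1": "The contractor delayed the inspection of the water plant.",
    "memo_2": "Water samples from the plant failed the inspection.",
}

def tokenize(text):
    """Lowercase the text and return runs of letters (Unicode-aware)."""
    return re.findall(r"[^\W\d_]+", text.lower())

# One Counter per document: the rows of the document-term matrix.
counts = {name: Counter(tokenize(text)) for name, text in docs.items()}

# Shared vocabulary: the columns of the matrix.
vocab = sorted(set().union(*counts.values()))

# Print a CSV-style table that a spreadsheet could open.
print("doc," + ",".join(vocab))
for name, c in counts.items():
    print(name + "," + ",".join(str(c[w]) for w in vocab))
```

Note that "the" tops both rows. This is why the next step removes such words.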

The process can be daunting, however, so Aguirre offered tips to guide investigative journalists through it.

Ask Questions

To analyze any document, Aguirre said, journalists should first ask a series of relevant questions, just as they would of any other source of information. Depending on the document, the answers could reveal the names of those involved, locations, or the dates of events. This initial stage is about establishing a baseline of facts and, possibly, a chronology of events.

Process the Text

In the next stage of analysis, Aguirre said, it is important to remove “stop words”: terms that don’t carry semantic content. These words, which include articles and conjunctions, are used constantly but carry no real information or meaning on their own. There is no official list, Aguirre said, and stop-word lists vary by language.
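
The sketch below illustrates the step with small hand-written stop-word lists, one for English and one for Spanish. Real projects usually start from a published list, such as those shipped with NLTK or spaCy, and then adjust it for the material at hand. Keeping a separate list per language reflects Aguirre's point that stop words differ from language to language.

```python
import re
from collections import Counter

# Tiny illustrative stop-word lists; real lists are much longer.
STOP_WORDS = {
    "en": {"the", "a", "an", "of", "and", "or", "to", "in", "from", "on"},
    "es": {"el", "la", "los", "las", "de", "del", "y", "o", "en", "un", "una"},
}

def content_words(text, lang):
    """Tokenize text and drop the stop words for the given language."""
    tokens = re.findall(r"[^\W\d_]+", text.lower())
    stops = STOP_WORDS[lang]
    return [t for t in tokens if t not in stops]

en = "The contractor delayed the inspection of the water plant."
es = "La empresa retrasó la inspección de la planta de agua."

print(Counter(content_words(en, "en")).most_common(3))
print(content_words(es, "es"))
```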

Consider Natural Language Processing

Sometimes, text analysis can be complex. In these situations, Aguirre recommended Natural Language Processing (NLP), a branch of artificial intelligence that helps computers understand the way humans write and speak. She highlighted a few handy NLP techniques and tools for analyzing documents.

- Named Entity Recognition (NER): This technique extracts the different kinds of entities mentioned in a document and classifies them into predefined categories, such as people’s names, places and locations, nationalities, religious or political groups, organizations and companies, and agencies and institutions. Aguirre said this is useful for understanding what a document is talking about.

- Topic Modeling: This technique analyzes a body of text and identifies groups of similar words within it. “I like to think of topic modeling not only as a technique to analyze your data, but also to organize,” Aguirre said, adding that algorithms such as LSA, LDA, BERTopic, and Top2Vec can be used for it. Data scientist Kurtis Pykes has described topic modeling as a type of statistical modeling that scans documents, detects word and phrase patterns within them, and groups similar words into topics. In this way, it can uncover hidden topics within a set of text documents.

- DocumentCloud: This popular open-source platform allows journalists to upload, organize, analyze, annotate, and publish source documents on the open web. Gumshoe, an AI tool recently integrated with DocumentCloud, aims to help journalists and newsrooms review massive document sets, such as those received in response to Freedom of Information requests.

- Hugging Face: As a recent article in MUO explained, Hugging Face is an open-source platform that provides tools and resources for language and computer vision projects. (It has both free and paid tiers.) Users can build, train, and deploy machine learning models on it. It also serves as a hub where AI experts, machine learning engineers, and data scientists share ideas and get support. In essence, Aguirre said, Hugging Face helps users “find very specific modules available for a wide variety of languages.”
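
Production NER typically relies on trained statistical models, such as spaCy pipelines or models hosted on Hugging Face. To show the basic idea without extra dependencies, the sketch below takes a much simpler rule-based approach instead. It uses a hand-built dictionary of known entities (a "gazetteer") and a regular expression for dates. The names, labels, and sample sentence are hypothetical.

```python
import re

# Hypothetical gazetteer: known names mapped to entity categories.
GAZETTEER = {
    "Acme Water Corp": "ORG",
    "Ministry of Health": "ORG",
    "Maria Lopez": "PERSON",
    "Mexico City": "LOCATION",
}

# ISO-style dates such as 2023-03-14.
DATE_RE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")

def find_entities(text):
    """Return (entity, label, start offset) tuples sorted by position."""
    found = []
    for name, label in GAZETTEER.items():
        for m in re.finditer(re.escape(name), text):
            found.append((m.group(), label, m.start()))
    for m in DATE_RE.finditer(text):
        found.append((m.group(), "DATE", m.start()))
    return sorted(found, key=lambda e: e[2])

text = ("On 2023-03-14, Maria Lopez of Acme Water Corp met officials "
        "from the Ministry of Health in Mexico City.")
for entity, label, _ in find_entities(text):
    print(label, entity)
```

Unlike a trained model, a gazetteer only finds entities someone has already listed. That limitation is the main reason newsrooms turn to statistical NER for unfamiliar document sets.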
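
To show what topic modeling does under the hood, here is a deliberately tiny version of LDA, one of the algorithms Aguirre named. It is fitted with collapsed Gibbs sampling in plain Python. The documents, number of topics, priors (`alpha`, `beta`), and iteration count are all illustrative assumptions. In practice, a library such as gensim or scikit-learn would be used instead.

```python
import random
from collections import Counter

def lda_gibbs(docs, k, alpha=0.1, beta=0.01, iters=200, seed=0):
    """Fit LDA to tokenized docs with collapsed Gibbs sampling.

    Returns (topic_word, doc_topic) count tables.
    """
    rng = random.Random(seed)
    V = len({w for d in docs for w in d})        # vocabulary size
    doc_topic = [[0] * k for _ in docs]          # tokens per (doc, topic)
    topic_word = [Counter() for _ in range(k)]   # tokens per (topic, word)
    topic_total = [0] * k                        # tokens per topic
    z = []                                       # topic of every token
    for d, doc in enumerate(docs):               # random initialization
        zd = []
        for w in doc:
            t = rng.randrange(k)
            zd.append(t)
            doc_topic[d][t] += 1
            topic_word[t][w] += 1
            topic_total[t] += 1
        z.append(zd)
    for _ in range(iters):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                t = z[d][i]
                # Remove this token's current assignment from the counts.
                doc_topic[d][t] -= 1
                topic_word[t][w] -= 1
                topic_total[t] -= 1
                # Unnormalized conditional probability of each topic.
                weights = [
                    (doc_topic[d][j] + alpha)
                    * (topic_word[j][w] + beta) / (topic_total[j] + V * beta)
                    for j in range(k)
                ]
                t = rng.choices(range(k), weights=weights)[0]
                # Record the newly sampled topic.
                z[d][i] = t
                doc_topic[d][t] += 1
                topic_word[t][w] += 1
                topic_total[t] += 1
    return topic_word, doc_topic

docs = [
    "water plant contamination water sample".split(),
    "water sample lead contamination plant".split(),
    "contract bid company payment contract".split(),
    "company payment bid contract invoice".split(),
]
topic_word, doc_topic = lda_gibbs(docs, k=2)
for t, counts in enumerate(topic_word):
    top = [w for w, n in counts.most_common() if n > 0][:3]
    print(f"topic {t}: {top}")
```

With a corpus this small, the exact split depends on the random seed. The output is meant to show the mechanics of the method, not a reliable result.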

Collaborate

The process of analyzing documents sometimes requires technical skills that journalists may not have. In such cases, Aguirre urged journalists to collaborate with academics, with other newsrooms that have data teams, or with programmers. These partners can help them handle complex investigations and extract the different kinds of entities in documents.

Aguirre said that collaboration “is like a great way to deal with complex investigations regarding documents,” especially for journalists who don’t program. She added that journalists don’t have to read through page after page before analyzing a document. Instead, NLP techniques let them go straight to specific pages and extract the information they need.

“These techniques help to find out where to look for [specific] information [in the documents],” explained Aguirre.
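
Here is one way to make the "where to look" idea concrete. It assumes a document has already been split into pages of plain text, for example after OCR or PDF text extraction. An inverted index then maps each word to the pages that contain it, so a reporter can jump straight to the relevant pages. The page texts and the `pages_mentioning` helper are hypothetical.

```python
import re
from collections import defaultdict

# Hypothetical page texts from one long document (page number -> text).
pages = {
    1: "Summary of the procurement process for the water plant.",
    2: "Acme Water Corp submitted the only bid.",
    3: "Inspection results for the water plant were withheld.",
    4: "Payment to Acme Water Corp was approved without review.",
}

# Inverted index: each lowercase word -> set of pages containing it.
index = defaultdict(set)
for page_no, text in pages.items():
    for word in re.findall(r"[^\W\d_]+", text.lower()):
        index[word].add(page_no)

def pages_mentioning(*terms):
    """Return sorted page numbers that contain every one of the terms."""
    sets = [index.get(t.lower(), set()) for t in terms]
    return sorted(set.intersection(*sets)) if sets else []

print(pages_mentioning("acme"))            # → [2, 4]
print(pages_mentioning("water", "plant"))  # → [1, 3]
```

The same lookup works with entities from an NER step instead of raw words, which gets closer to the workflow Aguirre describes.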

Watch the full GIJC23 panel on Text Analysis for Investigative Reporting.