The Twitterverse Of Donald Trump in 26,234 Tweets

We wanted to get a better idea of where President-elect Donald Trump gets his information. So we analyzed everything he has tweeted since he launched his campaign to take a look at the links he has shared and the news sources they came from.

Getting the Data

To do this kind of data analysis, we needed an archive of @realDonaldTrump tweets. We started by scraping his feed using Twitter’s API, but Twitter limits the scraping of tweets to roughly 3240 at a time, which represents less than half of his account’s output since he launched his presidential run.

We were able to procure a fuller corpus from the Trump Twitter Archive, built by developer Brendan Brown, who had found a nifty workaround for that problem: scraping tweets for set time frames and adding them up at the end. Brown’s data, available as CSV or JSON, only went to Nov. 9, 2016. We extended his data set to Nov. 17, 2016, by scraping the remaining tweets directly.

Here’s how:

- Start by getting developer oauth credentials from Twitter: https://apps.twitter.com/

- If you don’t already have Python installed, start by getting Python up and running. If you don’t already have a favorite package manager, also make sure you have pip.

- Install tweepy: `pip install tweepy`

- Copy tweet_dumper.py to wherever you keep your scripts. Edit it to include your developer oauth credentials at the top and the username you want to scrape at the bottom. (Thank you to Quartz Things reporter David Yanofsky for the original script.)

- Run it with `python scrapername.py`, substituting the name of your copy of the script (tweet_dumper.py unless you renamed it), to generate a CSV of tweets.
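The script itself is not reproduced here, but the general pattern for paging backwards through a timeline can be sketched as follows. This is a minimal illustration, not the code in tweet_dumper.py: `fetch_all_tweets` and `fetch_page` are hypothetical names, and `fetch_page` stands in for whatever API call returns one page of tweets (in tweepy, a wrapper around `api.user_timeline` could play that role).

```python
def fetch_all_tweets(fetch_page, page_size=200):
    """Collect tweets by walking backwards through a timeline.

    fetch_page(count, max_id) is a hypothetical callable that returns a list
    of dicts with an integer "id" key, newest first, containing only tweets
    whose id is <= max_id (or the newest tweets when max_id is None).
    """
    all_tweets = []
    max_id = None
    while True:
        page = fetch_page(count=page_size, max_id=max_id)
        if not page:
            break  # nothing older is available
        all_tweets.extend(page)
        # Next time, ask only for tweets strictly older than the oldest seen.
        max_id = min(tweet["id"] for tweet in page) - 1
    return all_tweets


# Stand-in data source so the sketch runs without credentials.
_fake_timeline = [{"id": i} for i in range(10, 0, -1)]


def _fake_fetch(count, max_id):
    pool = [t for t in _fake_timeline if max_id is None or t["id"] <= max_id]
    return pool[:count]


tweets = fetch_all_tweets(_fake_fetch, page_size=3)
print(len(tweets))
```

Passing the page fetcher in as an argument keeps the paging logic separate from credentials and network calls, which makes it easy to try out with stand-in data first.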

Here is what you’ll find in the resulting CSV:

- id: every tweet has a unique ID that you can use to reconstruct the tweet’s URL. The schema is “https://twitter.com/TWITTERHANDLE/status/IDNUMBER.” For instance, to access Donald Trump’s tweet with ID 805804034309427200, you would head to: https://twitter.com/realDonaldTrump/status/805804034309427200

- created_at: this will give you the date and time the tweet was created. For example 2016-12-01 15:57:15

- favorites: number of times the tweet was favorited. Note that if the entry is a retweet, the favorite count will not be shown.

- retweet: how often the tweet was retweeted

- retweeted: whether the tweet was a retweet (true) or not (false)

- source: how the tweet was posted, e.g. “Twitter for iPhone” or “Twitter Web Client”

- text: the content of the tweet
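As a rough sketch of how these columns can be put to work in Python, the helpers below rebuild a tweet’s URL from its id, parse a created_at value, and combine two sets of rows without double-counting tweets. The function names are our own illustrations, and the rows are assumed to be dicts keyed by the column names above (as produced by, say, `csv.DictReader`).

```python
from datetime import datetime


def tweet_url(handle, tweet_id):
    """Rebuild a tweet's URL from the account handle and the id column."""
    return f"https://twitter.com/{handle}/status/{tweet_id}"


def parse_created_at(value):
    """Parse a created_at string such as '2016-12-01 15:57:15'."""
    return datetime.strptime(value, "%Y-%m-%d %H:%M:%S")


def merge_by_id(archive_rows, fresh_rows):
    """Combine two lists of row dicts, keeping one row per tweet id."""
    merged = {}
    for row in list(archive_rows) + list(fresh_rows):
        # Keep ids as strings: spreadsheets can round 18-digit numbers.
        merged[str(row["id"])] = row  # a later row replaces an earlier duplicate
    return sorted(merged.values(), key=lambda row: int(row["id"]))


print(tweet_url("realDonaldTrump", "805804034309427200"))
print(parse_created_at("2016-12-01 15:57:15").date())
```

The merge step is one way to join an existing archive with freshly scraped tweets: because every tweet has a unique id, overlapping rows collapse into one.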

Parsing the Tweets

The question we wanted to ask about Trump’s tweets was this: is there anything to learn from the URLs that @realDonaldTrump circulated during his campaign?

For that we needed the actual URLs. In Google Spreadsheets, we used a regular expression to extract strings that started with “http”. We expanded these links using the node.js expand-url module.

- Install dependencies with `npm install async expand-url`

- Copy your URL array into url-expander.js and run it using this command: `node url-expander.js`

- Paste the output into a new CSV and merge that with your original spreadsheet.

- Use more Google Spreadsheets regex to zero in on the domain names.
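The link-extraction step was done with a spreadsheet regex, but the same idea can be sketched in Python. This is only an illustration of pulling out strings that start with “http”: the pattern and the `extract_urls` helper are our own assumptions, not the formula used in the spreadsheet.

```python
import re

# Matches "http://" or "https://" followed by any run of non-space characters.
URL_PATTERN = re.compile(r"https?://\S+")


def extract_urls(text):
    """Return every substring of text that looks like a link."""
    # Trim punctuation that often clings to the end of a link in prose.
    return [url.rstrip(".,;:!?)\"'") for url in URL_PATTERN.findall(text)]


sample = "Great rally! https://t.co/abc123 and http://example.com/x."
print(extract_urls(sample))
```

The links that come out of a tweet are usually shortened, so they would still need to be expanded before the domain names mean anything.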

We added this data back into the larger spreadsheet and stripped the links down to their root URL, again using Spreadsheets regex capabilities. This finally allowed us to group root URLs and count them using pivot tables.

This is by no means the only (or even the best) way to extract a list of domain names from a corpus of tweets (you could always extract all the links programmatically, using Python or MySQL), but it was our strategy given the time and resources we had.
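To give a sense of the programmatic route, here is a minimal Python sketch that reduces a list of already-expanded URLs to host names and counts them, roughly what the pivot table did. The function names and the example.com URLs are placeholders, and dropping a leading “www.” is a simplifying assumption about what counts as a root URL.

```python
from collections import Counter
from urllib.parse import urlparse


def root_domain(url):
    """Return the lowercased host of a URL, without a leading 'www.'."""
    host = urlparse(url).hostname or ""  # hostname is None for non-URLs
    return host[4:] if host.startswith("www.") else host


def count_domains(urls):
    """Count how often each host appears, skipping strings with no host."""
    domains = (root_domain(url) for url in urls)
    return Counter(domain for domain in domains if domain)


urls = [
    "https://www.example.com/a",
    "http://example.com/b",
    "https://news.example.org/x",  # other subdomains are left as they are
    "not a url",
]
for domain, total in count_domains(urls).most_common():
    print(domain, total)
```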

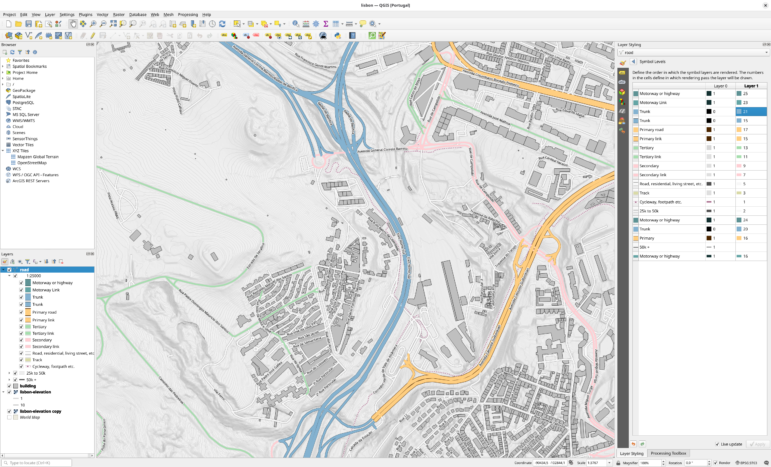

We then modified one of Mike Bostock’s d3.js graphics for our needs, styled it to fit the BuzzFeed look-and-feel, and allowed our audience to explore the data using a zoom function. If you want to learn more about D3, O’Reilly has an excellent primer.

Public Figures and Social Data

The biggest question this project brought up was that of the importance of social media for public figures.

When a president-elect makes official announcements on Twitter, do they become important public documents? If yes, should we be able to access an archive of these tweets, beyond what a private company has decided to provide? Shoot me your thoughts at lam.vo@buzzfeed.com.

This article first appeared on BuzzFeed News and is reproduced here with the author’s permission. It was also cross-posted on Source.

Lam Thuy Vo is an Open Lab Fellow for BuzzFeed News and is based in San Francisco. She is a German-born Vietnamese reporter who codes, writes, and creates visuals.